Video Anomaly Detection From Edge to Cloud With Qdrant

Thierry Damiba

March 15, 2026

What if your surveillance cameras could detect fights, accidents, intrusions, and equipment failures without ever being trained on those specific events?

Traditional video classifiers need labeled examples of every anomaly type you want to catch. That breaks in the real world. You can’t enumerate everything that could go wrong, and the moment something new happens, your model has no class for it and scores it 0.0.

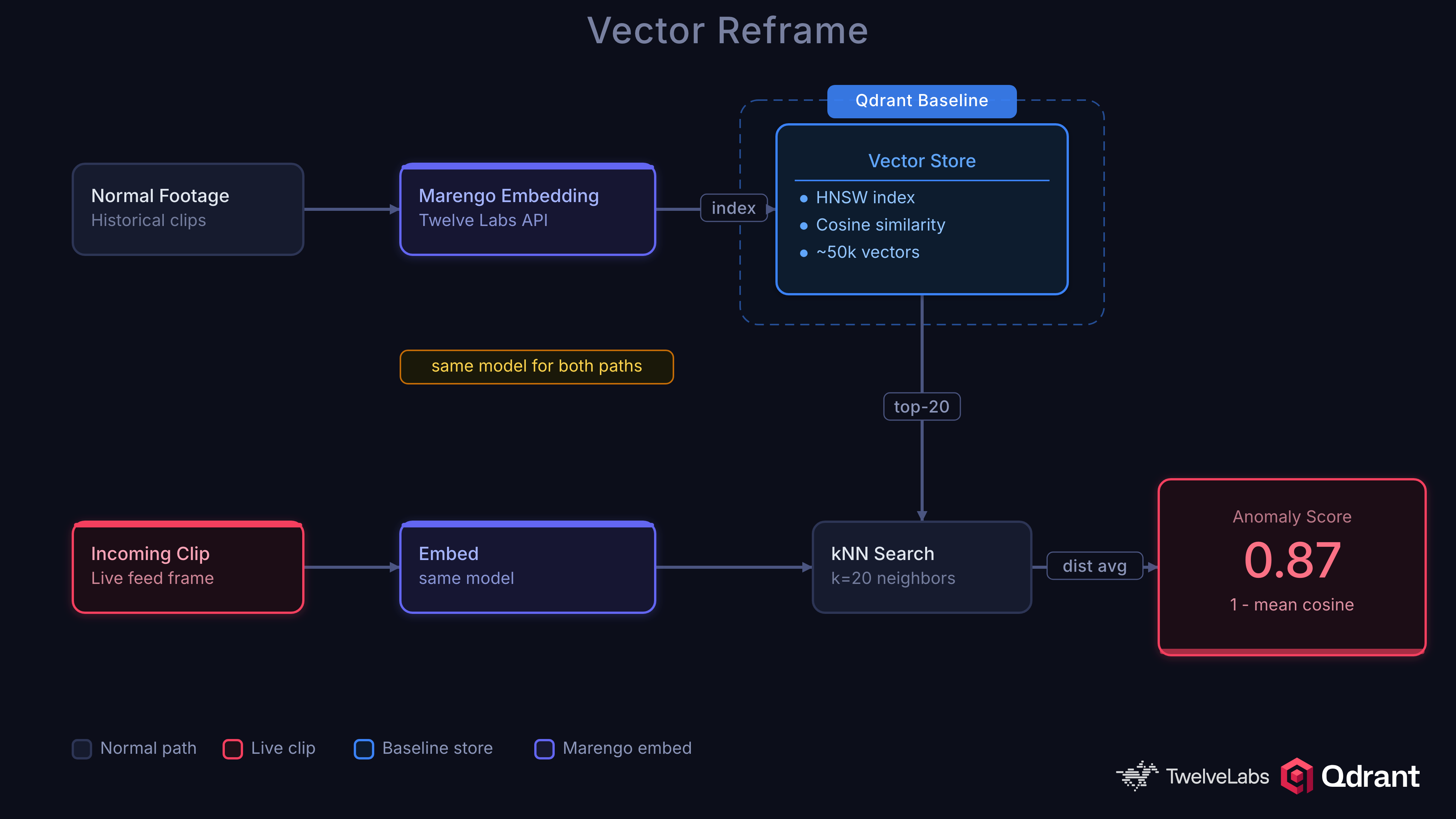

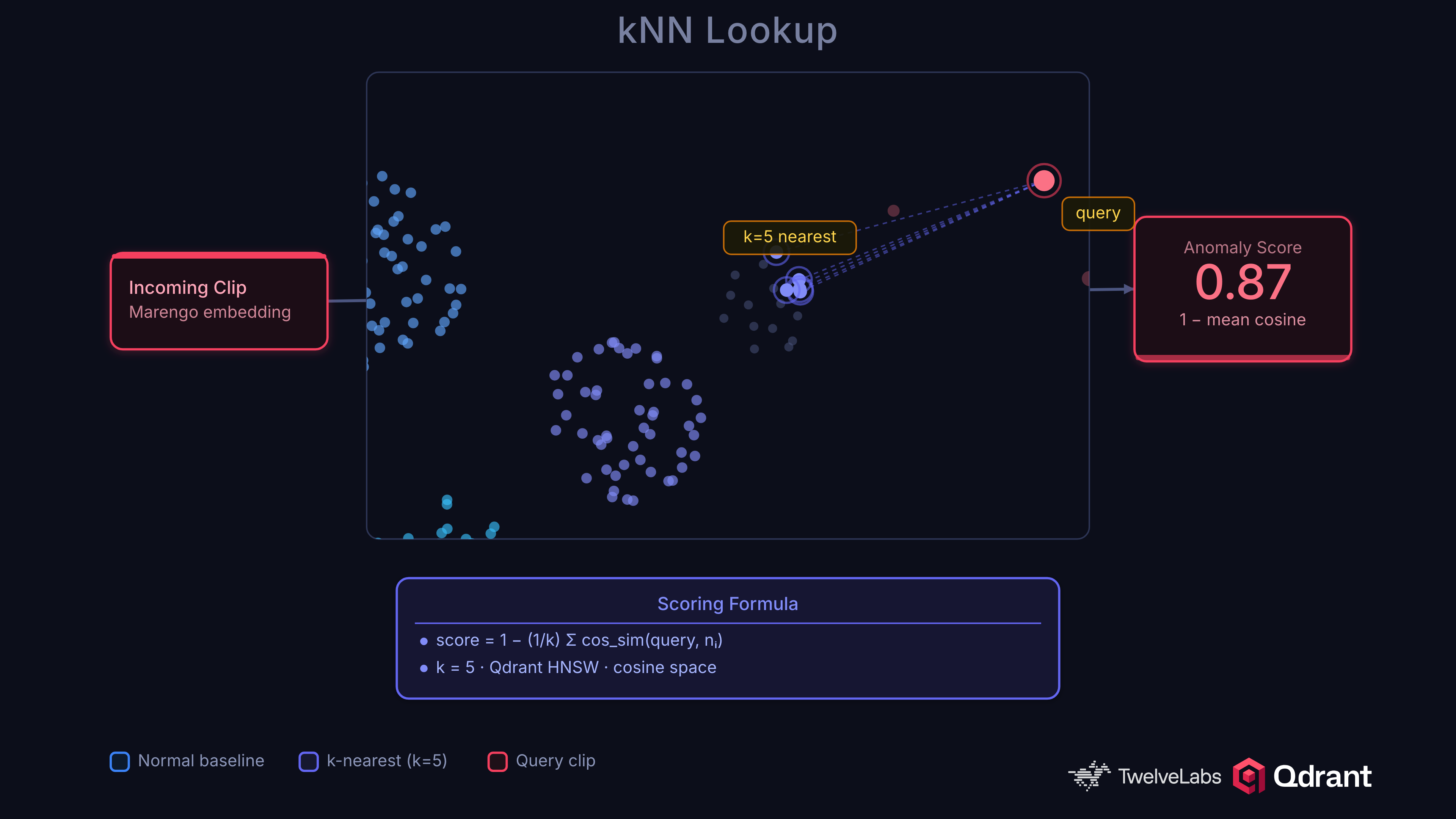

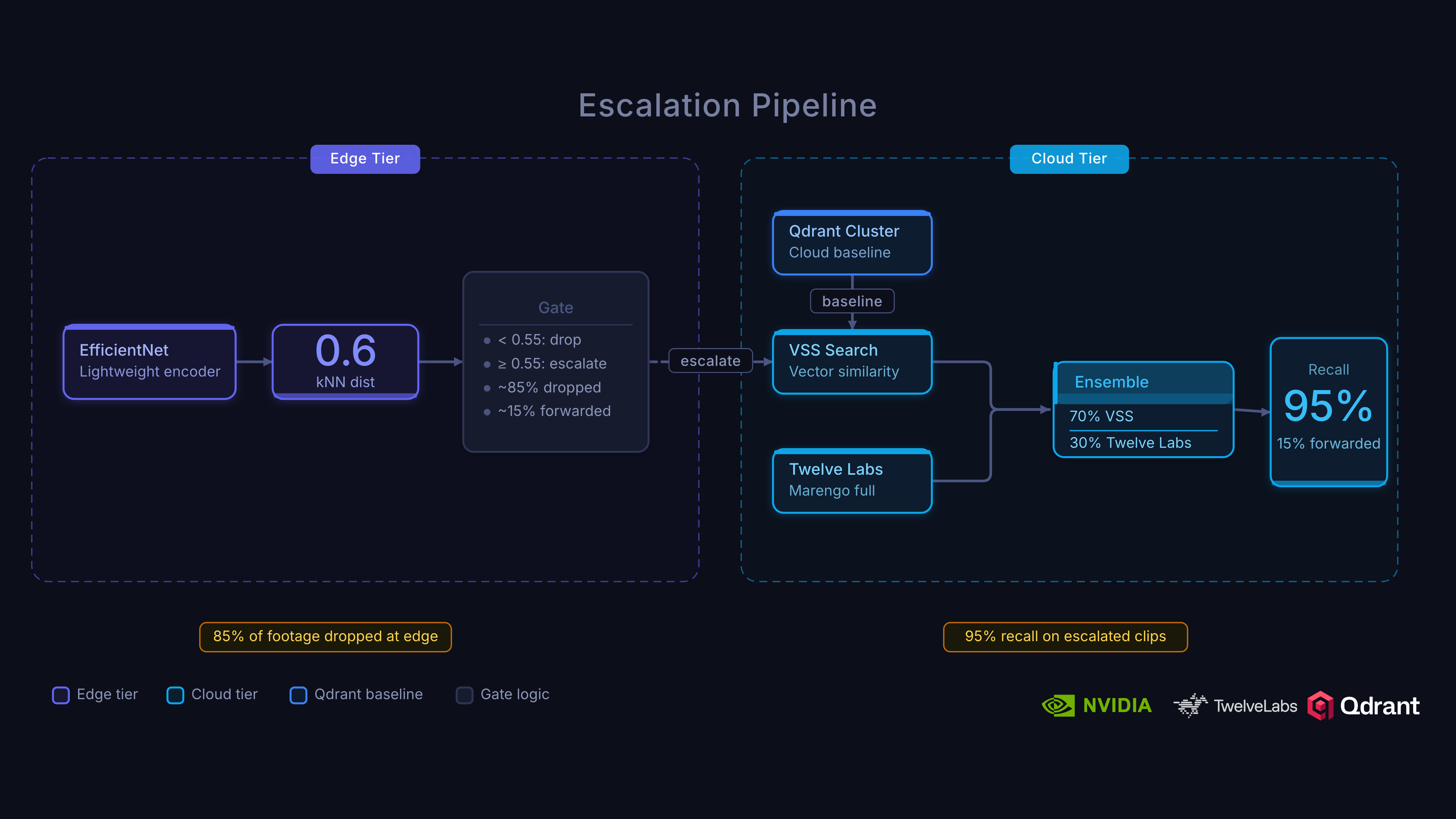

We built a system that takes a different approach: reframe anomaly detection as a nearest-neighbor search problem. Instead of asking “is this a fight?”, ask “how different is this from what we normally see?” That question is a vector distance calculation, and Qdrant answers it in sub-millisecond time.

The Idea

Index video embeddings of normal activity into Qdrant as a baseline. When a new clip arrives, embed it and search for its nearest neighbors. If the clip is far from anything in the baseline, it’s anomalous. No anomaly labels required, no retraining when new anomaly types emerge.
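The scoring step can be sketched in a few lines. This is a minimal illustration, not the project's code: it uses brute-force search in plain Python where the real system queries Qdrant, and the toy 3-dimensional vectors, the choice of cosine distance, the mean-of-k aggregation, and the names `cosine_distance` and `knn_anomaly_score` are all assumptions made for the example.

```python
import math


def cosine_distance(a, b):
    """Return 1 - cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def knn_anomaly_score(clip, baseline, k=3):
    """Mean cosine distance from `clip` to its k nearest baseline vectors.

    Brute-force stand-in for the nearest-neighbor search that Qdrant
    would perform over the indexed baseline (assumption for illustration).
    """
    distances = sorted(cosine_distance(clip, vec) for vec in baseline)
    nearest = distances[:k]
    return sum(nearest) / len(nearest)


# Toy 3-d vectors standing in for real video embeddings (hypothetical data).
baseline = [
    [1.0, 0.0, 0.0],
    [0.9, 0.1, 0.0],
    [0.95, 0.05, 0.0],
    [0.8, 0.2, 0.0],
]
normal_clip = [0.92, 0.08, 0.0]  # points the same way as the baseline
odd_clip = [0.0, 0.1, 1.0]       # points somewhere the baseline never does

normal_score = knn_anomaly_score(normal_clip, baseline)
odd_score = knn_anomaly_score(odd_clip, baseline)
print(f"normal={normal_score:.4f} odd={odd_score:.4f}")
```

The clip that resembles the baseline scores close to 0, while the clip pointing in an unseen direction scores close to 1. No label for the second clip was ever needed.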

This works because the space of “normal” is learnable, but the space of “abnormal” is unbounded. A binary classifier trained on 13 crime categories will miss a forklift collision or a pipe burst. kNN distance from normal flags anything that sits far from the baseline, whatever its category.

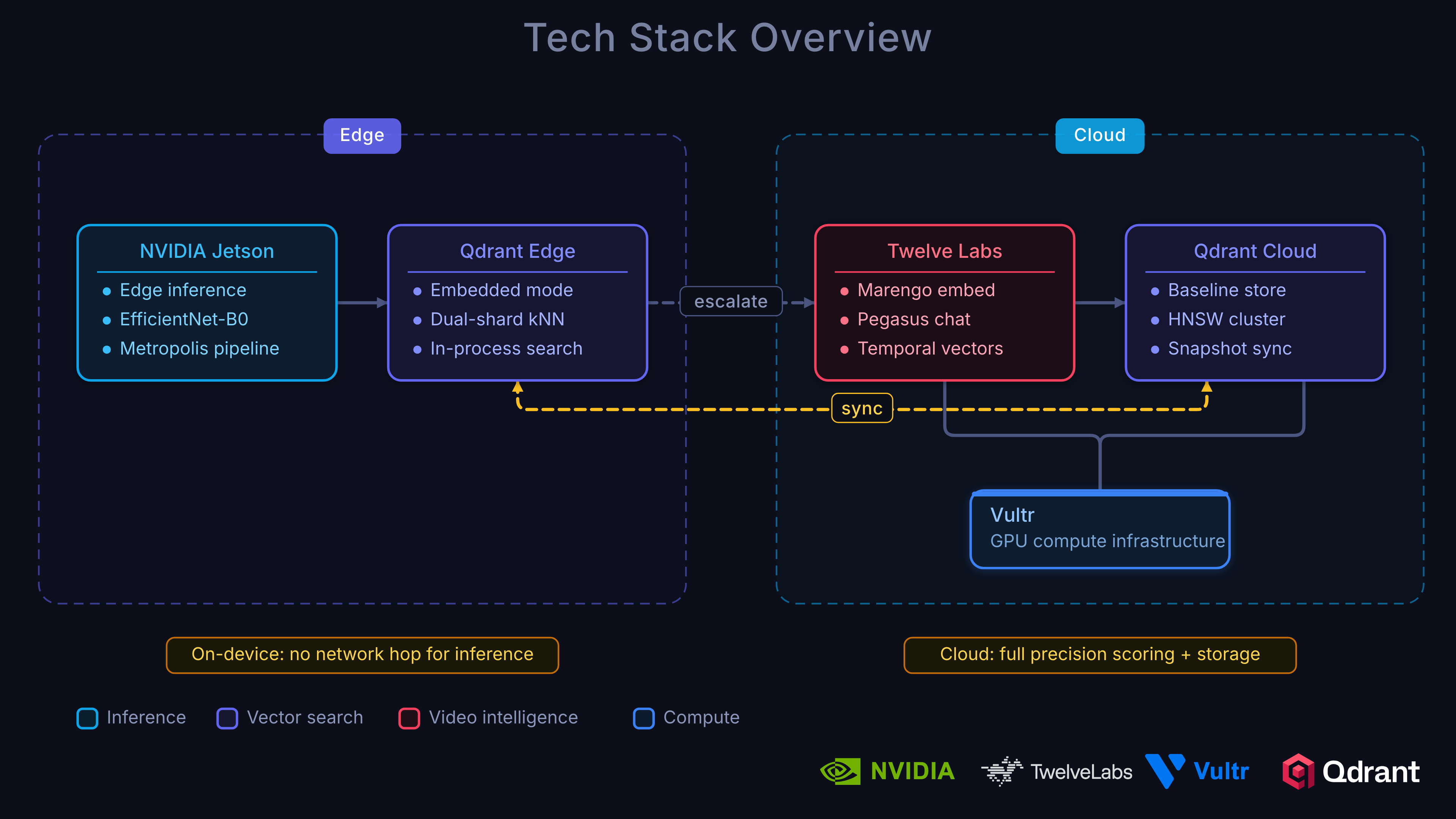

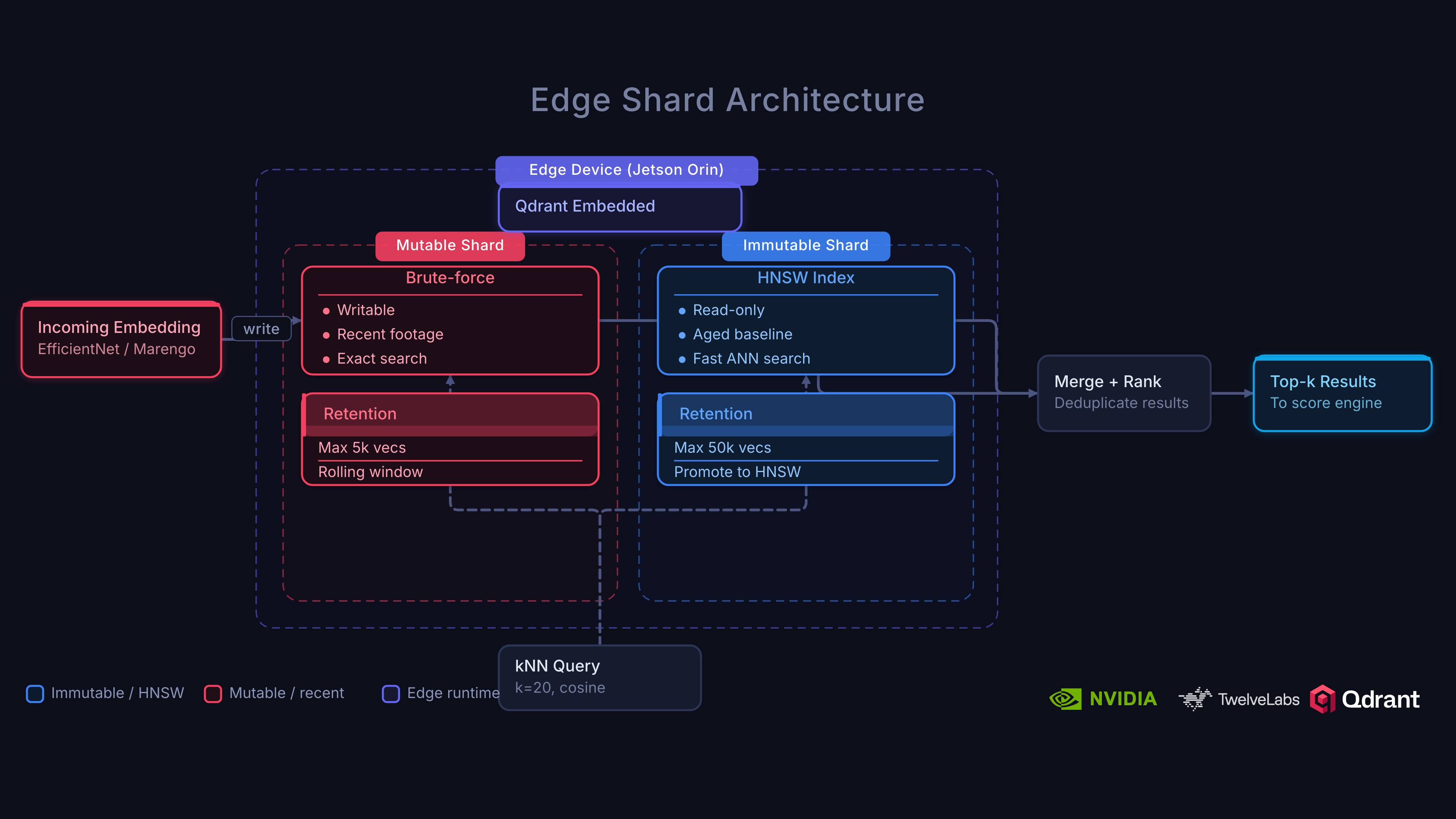

The Stack

Qdrant Edge runs directly on NVIDIA Jetson devices with a two-shard architecture: an immutable HNSW shard synced from the cloud as the baseline, and a mutable shard for live writes. The result is sub-millisecond kNN lookups with no network dependency and full offline resilience.
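To make the two-shard idea concrete, here is a conceptual sketch in plain Python. It does not use the Qdrant Edge API: `TwoShardIndex` and its methods are hypothetical names, the search is brute force rather than HNSW, and the assumption that a query fans out to both shards and merges the hits goes beyond what the description above states.

```python
import heapq
import math


def cosine_distance(a, b):
    """Return 1 - cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


class TwoShardIndex:
    """Hypothetical stand-in: a frozen baseline shard plus a writable live shard."""

    def __init__(self, baseline):
        # Immutable shard: stored as tuples so it cannot be edited in place.
        self._baseline = tuple((pid, tuple(vec)) for pid, vec in baseline)
        # Mutable shard: accepts live writes on the device.
        self._live = []

    def upsert(self, point_id, vector):
        """Write a new point to the mutable shard only."""
        self._live.append((point_id, tuple(vector)))

    def sync_baseline(self, baseline):
        """Replace the whole immutable shard, e.g. after a cloud sync."""
        self._baseline = tuple((pid, tuple(vec)) for pid, vec in baseline)

    def search(self, query, k=3):
        """Return the k closest (distance, shard, id) hits across both shards."""
        hits = [
            (cosine_distance(query, vec), "baseline", pid)
            for pid, vec in self._baseline
        ]
        hits += [
            (cosine_distance(query, vec), "live", pid)
            for pid, vec in self._live
        ]
        return heapq.nsmallest(k, hits, key=lambda hit: hit[0])


index = TwoShardIndex([("b1", [1.0, 0.0]), ("b2", [0.9, 0.1])])
index.upsert("l1", [0.0, 1.0])  # live write lands in the mutable shard
hits = index.search([0.1, 1.0], k=2)
for distance, shard, point_id in hits:
    print(f"{point_id} ({shard}): {distance:.3f}")
```

Because everything lives in local memory, the lookup needs no network round trip, which is the property the edge deployment depends on.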

Twelve Labs Marengo 3.0 provides the video embeddings. Unlike frame-level models like CLIP (0.23 AUC-ROC), Marengo processes temporal dynamics, audio, and scene context as a unified signal (0.97 AUC-ROC). One model handles both anomaly scoring and semantic search across your video archive.

NVIDIA Metropolis VSS orchestrates GPU-accelerated video ingestion on Vultr Cloud GPUs: embeddings, VLM captioning, audio transcription, and CV pipelines running together.

Vultr provides the cloud GPU infrastructure that powers the entire cloud tier. Dedicated NVIDIA GPUs with per-hour billing and a global data center footprint keep latency low and costs predictable for edge-to-cloud escalation.

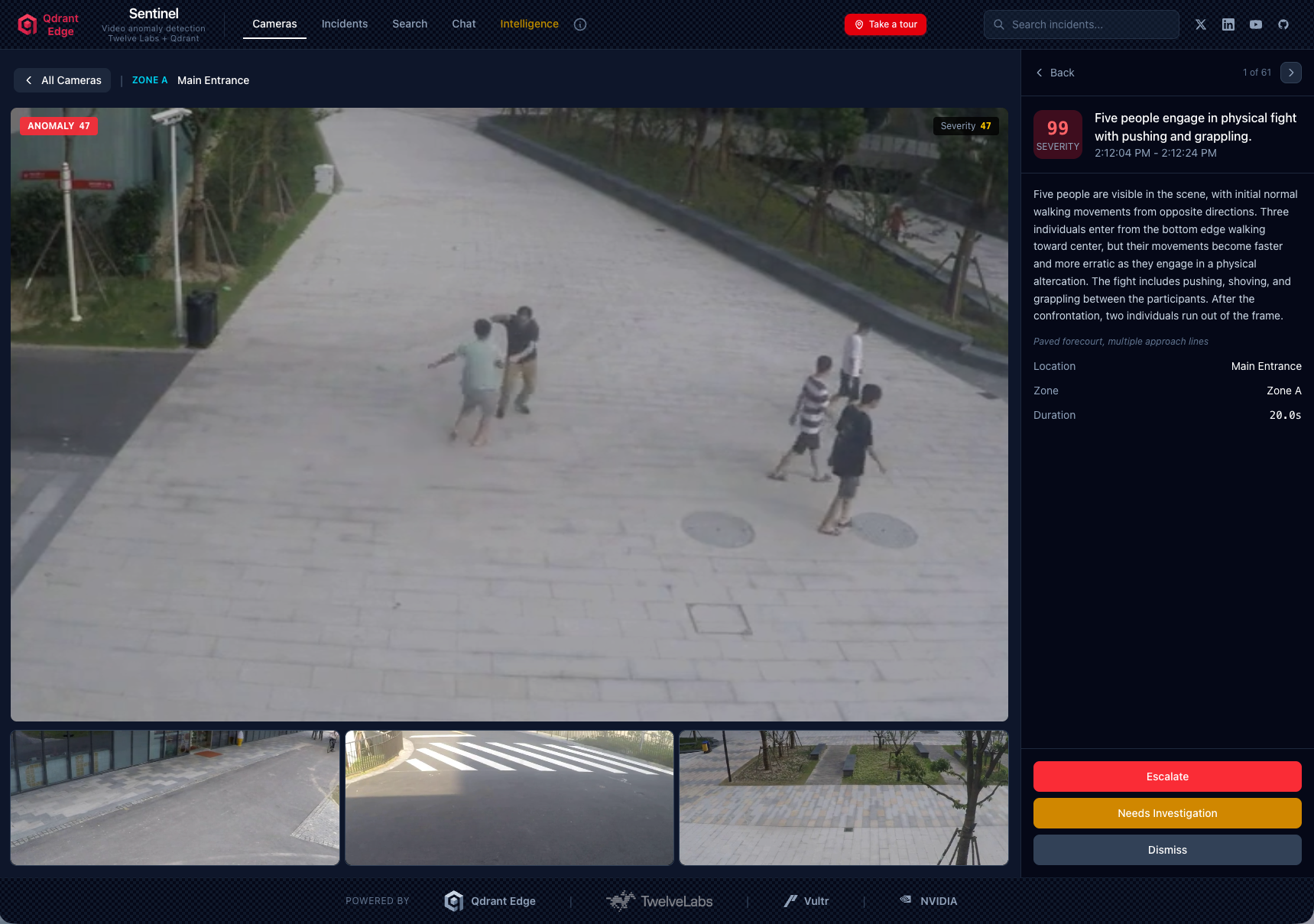

What It Produces

The system transforms live surveillance streams into:

- Real-time anomaly scores using kNN distance from the normal baseline, with temporal smoothing and hysteresis thresholding to filter noise

- Incident reports with severity scoring, timelines, and natural-language explanations of what happened

- Semantic video search across all cameras and time periods, for example: “Show me unusual activity at the north entrance last week.”

- Interactive Q&A about detected events, grounded in actual video content

- Edge-to-cloud escalation that reduces cloud processing volume by ~6x while catching ~95% of true anomalies
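The smoothing and hysteresis step in the first bullet can be sketched as follows. The exponential moving average, the `alpha` value, and the `high`/`low` thresholds are illustrative assumptions; the text does not say which smoothing method or threshold values the system uses.

```python
def smooth_scores(scores, alpha=0.5):
    """Exponential moving average over raw per-clip anomaly scores."""
    smoothed = []
    current = None
    for score in scores:
        if current is None:
            current = score
        else:
            current = alpha * score + (1 - alpha) * current
        smoothed.append(current)
    return smoothed


def hysteresis_flags(scores, high=0.65, low=0.35):
    """Turn the alert on at score >= high; keep it on until score < low."""
    flags = []
    active = False
    for score in scores:
        if not active and score >= high:
            active = True
        elif active and score < low:
            active = False
        flags.append(active)
    return flags


# Hypothetical raw scores: one isolated spike, then a sustained event.
raw = [0.1, 0.9, 0.1, 0.1, 0.8, 0.9, 0.7, 0.5, 0.2, 0.1]
smoothed = smooth_scores(raw)
flags = hysteresis_flags(smoothed)
for r, s, f in zip(raw, smoothed, flags):
    print(f"raw={r:.2f} smoothed={s:.2f} alert={f}")
```

The one-clip spike at index 1 is damped below the upper threshold and never raises an alert. The sustained event does, and the alert stays on while the smoothed score drifts between the two thresholds instead of flickering.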

Why Edge Matters

A 50-camera deployment generates 432,000 clips per day. Sending every clip to the cloud for embedding and scoring is neither fast enough nor cost-effective. Qdrant Edge triages on-device: only clips scoring above the anomaly threshold get escalated to the cloud tier for higher-fidelity analysis with Marengo 3.0 and ensemble scoring.

The result is a system that scales with camera count without scaling cloud costs linearly.
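A quick back-of-the-envelope check of these volumes, using the figures quoted in this post (50 cameras, 432,000 clips per day, a ~6x reduction). The clip length is not stated; it is inferred here under the assumption that every camera records around the clock.

```python
SECONDS_PER_DAY = 24 * 60 * 60

cameras = 50
clips_per_day = 432_000   # figure quoted in the post
reduction_factor = 6      # the "~6x" cloud-volume reduction quoted in the post

clips_per_camera = clips_per_day // cameras
# Inferred, assuming continuous 24-hour recording on every camera.
seconds_per_clip = SECONDS_PER_DAY / clips_per_camera
# Approximate, since the reduction factor is itself approximate.
escalated_per_day = clips_per_day / reduction_factor

print(f"clips per camera per day: {clips_per_camera}")
print(f"implied clip length: {seconds_per_clip:.0f} s")
print(f"clips escalated to cloud per day: ~{escalated_per_day:.0f}")
```

Under those assumptions each camera produces one clip every 10 seconds, and edge triage leaves roughly 72,000 clips per day for the cloud tier instead of all 432,000.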

Build It Yourself

We published a full 3-part tutorial that walks through every component of this architecture with working code: from kNN anomaly detection theory through Qdrant Edge’s two-shard design to baseline governance and Vultr deployment.

- Part 1: Architecture, Twelve Labs, and NVIDIA VSS

- Part 2: Edge-to-Cloud Pipeline

- Part 3: Scoring, Governance, and Deployment

GitHub: qdrant/video-anomaly-edge

Interactive Demo: qdrant-edge-video-anomaly.vercel.app

The concepts extend beyond surveillance. Manufacturing safety, retail analytics, traffic monitoring: anywhere you need to detect “something unusual” without defining every possible anomaly in advance.