Qdrant 2025 Recap: Powering the Agentic Era

Daniel Azoulai

·December 17, 2025

On this page:

This year was a defining year for Qdrant. Not because of a single feature or launch, but because of a clear shift in what the platform enables. As AI systems moved from static assistants to autonomous, multi-step agents, the demands placed on retrieval changed fundamentally. Speed alone was no longer enough. Production systems now require precise relevance control, predictable performance at scale, and the flexibility to run wherever data and users live.

We focused on meeting those requirements head-on. Rather than shipping disconnected features, we invested in deep, system-level improvements that strengthen Qdrant as long-term infrastructure. The result is a retrieval engine designed for real-world AI workloads: agentic, multimodal, hybrid, cost-efficient, and enterprise-ready.

Keep reading for a rundown.

Product Focus: Built for Production, Designed for Agents

Across customers, partners, and open-source users, the same patterns kept surfacing: relevance breaks down under complex queries, costs explode at scale, multi-tenancy becomes fragile, and deployment constraints slow teams down.

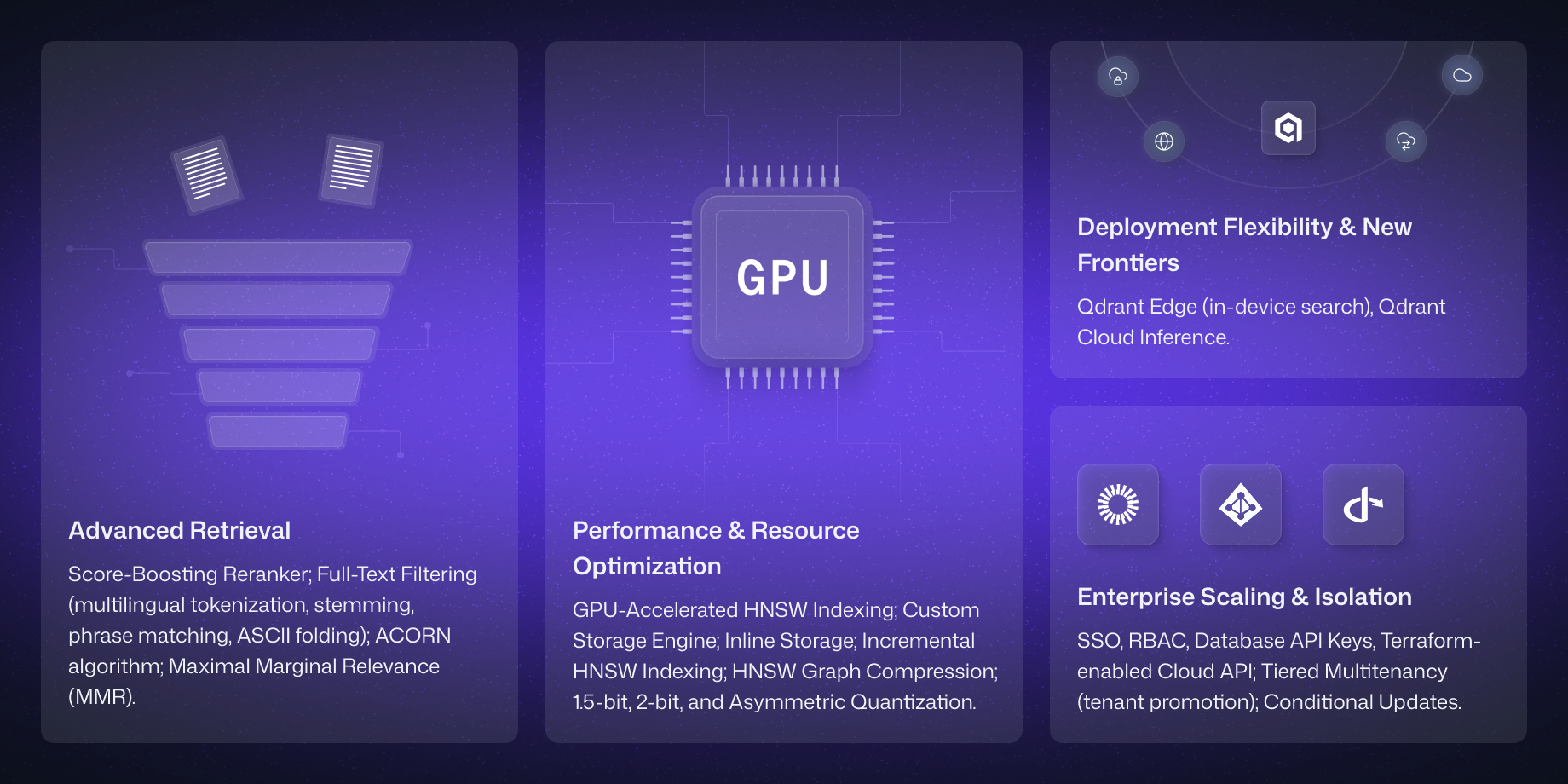

In response, our 2025 roadmap centered on four tightly connected capability areas:

• Advanced Retrieval to move beyond basic vector similarity

• Performance & Resource Optimization to control cost without sacrificing speed

• Enterprise Scaling & Isolation to support shared, mission-critical infrastructure

• Deployment Flexibility to run in cloud, hybrid, or even edge environments

Advanced Retrieval

In 2025, we focused on giving teams explicit control over retrieval quality as applications moved beyond basic semantic search. Our new capabilities make relevance more explainable, tunable, and aligned with real user intent, especially in agentic and hybrid search workflows.

Related enhancements:

• Score-Boosting Reranking allowing the blending of vector similarity with business signals

• Full-Text Filtering which brought native multilingual tokenization, stemming, and phrase matching

• ACORN algorithm for higher-quality filtered HNSW queries

• Maximal Marginal Relevance (MMR) to balance relevance and diversity

• ASCII folding for improved multilingual recall

Performance & Resource Optimization

To support large, cost-sensitive workloads, we targeted the biggest performance bottlenecks in production systems. New improvements help teams scale indexing and querying without over-provisioning memory or compute.

Related enhancements:

• GPU-Accelerated HNSW Indexing unlocks up to an order-of-magnitude faster ingestion

• Inline Storage embedded quantized vectors directly into the graph to dramatically improve disk-based search performance

• Custom storage engine optimized for predictable low-latency access

• Incremental HNSW indexing for upsert-heavy workloads

• HNSW graph compression to reduce memory footprint

• Expanded Quantization options, including 1.5-bit, 2-bit, and asymmetric quantization

Enterprise Scaling & Isolation

As Qdrant became shared infrastructure inside larger organizations, we focused on multitenancy, governance, and enterprise needs.

Related enhancements:

• Tiered Multitenancy enables efficient support for both small and large tenants within a single system

• Single Sign-On (SSO) and role-based access control (RBAC)

• Granular database API keys

• Terraform-enabled Cloud API for automation and governance

• Conditional updates for safe concurrent workflows and embedding migrations

Deployment Flexibility & New Frontiers

We also expanded where and how Qdrant can run to match modern AI architectures. Qdrant Cloud Inference unified embedding generation and vector search into a single managed workflow, simplifying hybrid and multimodal pipelines. Qdrant Edge extended retrieval directly onto devices, enabling low-latency, deterministic search without a server dependency.

• Native support for dense, sparse, and image embeddings

• Hybrid retrieval pipelines without external inference infrastructure

• Consistent APIs across cloud, hybrid, and edge deployments

Enabling Retrieval for the AI Era

Our customers validated our direction as we invested in more capable retrieval for AI. Below are just some of the companies that are use Qdrant.

• Tripadvisor activated a dataset of over one billion reviews to power its AI Trip Planner, driving 2-3x more revenue from users engaged with the new generative experience.

• OpenTable reinvented dining discovery by building its AI Concierge on Qdrant, utilizing sparse embeddings to precisely filter over 60,000 restaurants for natural language queries.

• HubSpot selected Qdrant to scale Breeze AI, its flagship intelligent assistant, ensuring highly personalized, context-aware responses without compromising on speed or reliability.

A Thriving Community

In 2025, the Qdrant community achieved high-velocity. Our ecosystem grew from a strong base of early adopters into a global community of tens of thousands of engineers, researchers, and builders shaping the future of AI retrieval together.

Community Engagement

Working with our community, we were able to create spaces together where practitioners could learn, share, and build.

Vector Space Day 2025 marked our first global Qdrant conference. Hosted at the Colosseum Theater in Berlin, the event brought together more than 400 in-person attendees, alongside hundreds more participating in a virtual hackathon. Talks and discussions spanned RAG, agentic memory, and distributed systems, with speakers from LlamaIndex, Vultr, and Google DeepMind.

To help developers bridge the gap between “Hello World” and production, we launched Qdrant Essentials. This comprehensive educational program covers vector search fundamentals, quantization strategies, and hybrid retrieval best practices. Thousands of developers have already learned from the course.

We also re-launched Qdrant Stars, our ambassador program recognizing community members who create tutorials, speak at meetups, and mentor new users. Contributors Leaders like Pavan Kumar Mantha and Tarun Jain became the backbone of local Qdrant communities, driving meetups from Hyderabad to San Francisco.

Momentum by the Numbers

The scale of community engagement in 2025 reflects accelerating adoption and collaboration:

• GitHub surpassed 27,000 stars

• Added 35 integrations, including an official n8n node.

• Our Github Issues tab evolved into an active collaboration space, with community contributions such as FastEmbed enhancements landing directly in core workflows

• Discord grew to 8,000+ members, serving as both a support hub and a place to share projects and wins

• On LinkedIn, Qdrant appears in over 50 technical deep dives per day

• Sponsored or supported 50+ AI and data events worldwide, including ODSC West and /function1

Looking Ahead: The 2026 Roadmap

The progress in 2025 was shaped by real feedback and real use cases from the community. Building on that momentum, our 2026 roadmap doubles down on efficiency, agent-native retrieval, and enterprise-scale operability.

• Efficiency & Scale: 4-bit quantization, read-write segregation, block storage integration

• Advanced Agent Retrieval: relevance feedback, expanded inference capabilities

• Robust Enterprise Deployment: fully scalable multitenancy, faster horizontal scaling, read-only replicas

If you’re building the next generation of intelligent applications, or the infrastructure that supports them, Qdrant is ready. Explore open roles on our team or start a free instance on Qdrant Cloud today.

Thanks for joining our mission!